22 July 2020

Brainchip, an Australian company, has innovated silicon technology capable of decentralizing AI processing from large data centers to mobile and IoT devices.

AI's significance lies in its ability to rapidly process complex data for cognitive functions like understanding and decision-making, far outpacing human capability. It excels in identifying patterns within vast or intricate datasets, which is instrumental in a wide variety of applications from search engines, fraud detection, medical diagnostics, through to industrial maintenance diagnostics.

Traditionally, AI operations have relied on substantial data center infrastructure, but Brainchip's technology paves the way for more versatile and widespread AI deployment.

The world's first commercially available neuromorphic processor

What makes Brainchip's technology special, however, is that it is modeled on the structures and processing methods of the human brain. This radically different approach promises high-performance, small-footprint, and ultra-energy-efficient edge applications.

This new technology called "Akida™" will have dramatic ramifications by spawning the development of a new breed of smart devices including robots.

The Terminator CPU was a neural network processor

A pivotal plot element in the “Terminator” movie series is the fictional Cyberdyne Corporation responsible for developing the self-aware Skynet military command and control system that went rogue and set about killing-off the human race.

The first movie (released in 1984) and sequels tell the story of the Computer Scientist (Miles Dyson) whose work provided the technology that powered Skynet. Dyson developed his neural network processor by reverse engineering the CPU from the first terminator (played by Arnold Schwarzenegger) that traveled back through time. The Terminator was crushed in a hydraulic press in a factory, leaving intact only a robot arm and the fabled broken silicon chip - the Terminator's Neural Network CPU - a gift from the future.

Miles Dyson said of the chip "It was a radical design, ideas we would never have thought of..."

Nearly 40 years ago, when the first Terminator movie was released, a neural network based CPU was pure futuristic fantasy.

Neuromorphic processor - from science-fiction to reality

It seems we have arrived at the future. Australian company Brainchip Holdings is about to launch the first of a series of chips supporting brain-like neural processing that will likely change the world.

The new chip called Akida™ is designed to learn and adapt.

This could be a Skynet moment.

Artificial intelligence requires processing grunt

So what's the fuss? Why is the development of a neuromorphic processor important and what difference can it make to the processing of artificial intelligence workloads?

To understand this we need to first understand artificial intelligence and compare the way existing computers process AI and then understand why the human brain does it so much better and more efficiently.

Artificial Intelligence is deep learning and decision making based on finding patterns in large scale data.

The data can be anything from financial transactions, audio signals, packet data traffic through routers, pictures, words – anything that can be transmitted, processed, or stored.

Powerful insights don’t come from hard-edged exact matches, but from fuzzy imperfect matches.

As one example, speech recognition requires tolerance for natural tonal variation, mispronunciations, sloppy grammar, stuttering, repetition and other speech flaws. Traditional computing, developed over 60 years and now part of our everyday lives, is based on precise matching. 1 + 1 always equals 2.

Finding close but not exact matches using computers built for unambiguous precision requires statistical calculations resulting in billions of high-speed computations. The computing power needed just can’t fit in small packages or be powered by small batteries.

But somehow, animal and human brains do it effortlessly.

And herein lies the motivation for pursuing neuromorphic computing.

If a brain as small as a bird's can outperform server farm computers in many useful vision, sound, motion, and other sensor processing tasks - using vastly less energy, occupying vastly less volume, and doing it "on the fly" (see what I did there?) - then perhaps we should understand the method.

Neuromorphic hardware mimics brain methods to vastly increase AI computational capacity in a much smaller package using much less power.

Brainchip's Akida™ performs AI in a single chip and requires only fractions of 1 watt to operate. It is highly energy efficient.

AI at the edge compared with AI processed in the cloud

The principal difference is where the compute to achieve AI takes place. The vast majority (I am guessing over 90%) of AI is processed in data centres not on the local device where the data has been acquired.

"The edge" is a term to describe devices that interface with the real world - like facial and object recognition cameras, sensors that "smell", audio sensors, industrial sensors and others.

An iPhone is a ubiquitous example of an edge device, but in industry it could be (for example) a vibration analyzer, a video camera that scans objects on a conveyor belt, or an artificial nose. But, in reality there are literally thousands of use cases for Edge AI.

AI at the edge means analyzing sensor data at the point of acquisition avoiding transmission to cloud based data centres.

And that's a game changer because sending data back through the internet to servers that undertake the heavy AI processing has obvious limitations.

Data connections are not always available or reliable, and the round trip over the link can prevent real time results. And there are serious concerns that future bandwidth, even with the additional capacity provided by 5G networks, will be swamped by the proliferation of smart IoT devices attempting connection to cloud computing.

For collision avoidance or steering around landscape features, decision making must be instant.
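To put a rough number on "instant", here is a small back-of-envelope sketch. The two delay figures are assumptions chosen purely for illustration, not measurements of any network or of Akida™; the function name is also just made up for this example.

```python
def distance_travelled_m(speed_kmh: float, delay_ms: float) -> float:
    """Metres a vehicle covers while it waits `delay_ms` for a decision."""
    speed_ms = speed_kmh * 1000 / 3600    # km/h -> metres per second
    return speed_ms * (delay_ms / 1000)   # milliseconds -> seconds

# Assumed, illustrative delays (not measured values)
CLOUD_ROUND_TRIP_MS = 100.0   # send data to a data centre and wait for the answer
ON_DEVICE_MS = 5.0            # decide locally, at the sensor

cloud_gap = distance_travelled_m(100, CLOUD_ROUND_TRIP_MS)
edge_gap = distance_travelled_m(100, ON_DEVICE_MS)
print(f"cloud: {cloud_gap:.2f} m travelled, edge: {edge_gap:.2f} m travelled")
```

Under these assumptions, a vehicle at 100 km/h covers a couple of metres before a cloud answer arrives, and that is before allowing for a dropped connection.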

The fact that Akida™ can replace power hungry and comparatively large servers (for AI applications) with one chip consuming less than 1 watt illustrates the energy efficiency of neural network processing and provides clues as to why the human brain is so powerful.

Compact high speed neuromorphic hardware consuming low power enables powerful functionality in small portable devices. Low cost means large scale deployment of these devices.

Industrial sensors - power efficient edge AI use cases.

Sensors are devices that represent real world events using electrical signals.

We are familiar with simple sensors that detect (for example) voltage, current, resistance, temperature, linear or rotational speed.

However, complex sensors such as video cameras, vibration sensors, microphones, finger-print touch pads, and even olfactory (smell) sensors generate high volumes of data requiring serious computing grunt for analysis to produce actionable information.

Real time decision making is needed for autonomous vehicle control where streaming sensor data (from Lidar, Video, 3D Accelerometers, and others) is generated from modelling the landscape into which the vehicle is moving. Reliably detecting the DIFFERENCE between shadows from overhead tree foliage, or a bird flying directly at the vehicle COMPARED TO a pedestrian is a non-trivial problem.

The challenge is that objects are almost never identically represented so inference systems need wide tolerance. An object may be viewed at different angles, different magnifications, different size and position within the viewing frame, and different lighting - but must still be recognized as the same object.

Pattern recognition must detect only distinguishing features and not rely on pixel perfect matches.

Traditional computer systems (Von Neumann) solve the problem through brute force; a neuromorphic processor applies a radically different architecture.

Brainchip: Replicating the human brain in silicon

The key to understanding Brainchip and neuromorphic computing is to look at how mammalian brains solve the problem of fast and efficient pattern recognition; there are five key principles...

- When it comes to patterns, near enough is good enough

- Simplifying through modelling and abstraction

- We don’t live in the real world, but something near to it

- We only process what’s different, we ignore sameness

- Memory and processing happen in the same place

Let's examine each of these principles...

0. For Brains - patterns are EVERYTHING

Appreciating the nature of intelligence requires understanding the importance of patterns.

"Patterns" in the broadest sense; we are not talking checks, stripes, or polka-dots.

But, we are talking about (say) "I walked into the room and I immediately sensed that something was out of place."

The brain is a device that stores (learns) patterns and later matches them to new data, and updates or identifies new patterns; getting smarter through experience.

Humans start loading patterns acquired from our senses from before birth. By the time we are five we have accumulated enough data and language to support accelerated learning. We build on foundations. Starting with simple "real world" knowledge, then increasingly abstract concepts to construct a skeleton to support the flesh of knowledge.

While this sounds allegorical, such knowledge structures actually build the neurological pathways to reach stored information.

Humans identify patterns in everything: language, math, facial recognition, personalities, situations, pain, movement - every piece of data that passes through our nervous system and brain is processed looking for and responding to patterns. It's the way we perceive the world and make decisions.

The word 'experience' is another way of saying 'accumulated a lot of patterns'.

Pattern recognition is not done by choice; it is automatic and fundamental for thought.

1. When it comes to patterns, near enough is good enough

The genius of the way humans process information is that our pattern matching method is highly tolerant of ambiguity. Pattern recognition need not rely on hard-edged exact matches; we not only identify patterns but also have a sense of how well matched they are.
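The difference between an exact match and a "near enough" match with a sense of how good it is can be sketched in a few lines. This uses Python's standard `difflib` module on text; it is only an illustration of tolerant matching, not the method used by the brain or by Akida™, and the phrases and the 0.7 cut-off are arbitrary assumptions.

```python
from difflib import SequenceMatcher

def similarity(a: str, b: str) -> float:
    """Score between 0.0 (nothing in common) and 1.0 (identical)."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()

def best_match(heard: str, known: list[str], threshold: float = 0.7):
    """Return (phrase, score) for the closest known phrase.

    The phrase is None when even the best candidate scores below `threshold`.
    """
    score, phrase = max((similarity(heard, k), k) for k in known)
    return (phrase if score >= threshold else None, score)

known_phrases = ["turn on the lights", "open the door", "play some music"]
heard = "tern on teh lites"   # sloppy input, as real speech often is

print(heard in known_phrases)             # exact matching finds nothing
print(best_match(heard, known_phrases))   # tolerant matching finds the closest phrase
```

The score is the software equivalent of a hunch: not just "what is it?" but "how sure am I?"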

Hence, words like "hunch", "intuition", and "Déjà vu" feature in our vernacular.

Experienced drivers will observe subtle changes in driving behaviors, car maneuvers, speed variations and immediately sense potential danger and be on the brakes before they can even think "what is it?"

We look at line cartoon drawings and recognize relatives, friends, politicians, and actors.

Further, patterns from one domain are recognized in another. Observing abstract art, a person might recognize similarities to signs of the zodiac. We look at the stars and see saucepans and animals.

This cross-domain pattern matching is a key element in creativity and problem solving.

Animals and humans automatically search for patterns in everything, we can't help but do it - it's hard wired.

Higher intelligence is the greater capacity to notice useful patterns and the ability to match concepts across frameworks.

In the quest for low powered high performance AI suitable for edge applications, the obvious path is to work out how the brain does it. Brainchip has studied the brain and its data processing methods. While many others have been working on it...

Brainchip has successfully translated these learnings from the wet organic world of the human brain and applied them to the dry silicon world of electronics.

2. Simplifying through modelling and abstraction

The secret to improving AI computing power with power efficiency is to not only throw more grunt at the problem but to also improve the method. The human brain does this not by churning through all of the captured raw data but by rejecting the meaningless rubbish and extracting the pieces that matter.

The human brain is compact with low power consumption (comparatively) but punches above its weight in processing grunt. This is achieved by abstracting information (simplifying it) to reduce the workload.

Even though we think we “see”, “hear”, “taste”, “smell” and “feel” the world clearly – our brains convert signals received from our senses into simplified and abstract data.

A lot of automatic pre-processing takes place in the brain for all sensory inputs before our conscious receives the information.

Vision for example is automatically corrected to straighten lines distorted by the eye (the eye is actually a very poor camera). Visual data is compensated for the fact that only a tiny part of the central vision is high resolution. And the eyes themselves each split the field of vision into separate data streams representing left field and right fields of view, and further down the preprocessing stream, the brain mixes the two streams together. Thus, through brain trauma, it is possible to totally lose the left or right fields of vision even though both eyes function perfectly.

Further, your brain incorporates information from other senses to "make sense” of the data the vision system has captured. For example, the brain knows from experience that the world is right way up. We have difficulty making visual sense of the world when it is upside down or the image defies physical laws. The sky is almost always at the top and water is almost always at the bottom, trees grow up not sideways or down.

Modelling and simplification is critical to reducing complex data to a form that can be stored, classified, and retrieved using simple and efficient methods.

3. We don’t live in the real world, but something near to it

The implication is both fascinating and scary; we don't actually see the world as it is.

Through prior learning and experience we build visual models of the physical world around us, and social models of people, stereotypes, and how we should respond to social interactions and situations. Behavioral scientists call this "our scripting."

Visual models are particularly important to enable rapid assessment and decision making. When scenes don’t conform to our visual models, the preprocessing system is temporarily thwarted, and our brains need to adjust to the new reality.

Further, during this preprocessing the brain predicts what is important and discards irrelevant information. Our senses are continually filtered to only deliver to our conscious information deemed relevant.

We don't just filter reality; we construct it, inferring an outside world from an ambiguous input.

Anil Seth, a neuroscientist at the University of Sussex, explains that we routinely fabricate our world view before all the facts are in, and most often base decisions on scant real data. We rely on a vast database of models to assist the construction of our own version of reality. We make assumptions.

Fun fact: In air crash investigations, the eye-witness testimony of younger people is considered more credible than that of older people because they have less life experience upon which to fabricate a new version of events based on what they think they saw rather than what they actually saw (leaving aside vision problems). This is related to the perception that older people think more slowly, where the true explanation is that they have far more life data to search through to find patterns.

The reason we turn grey as we grow older is because we no longer see the world in black and white.

While the above examples are visual, the same applies to all information that we process - sight, smell, touch, hearing, and balance. But, also in language and our world view. Prejudice comes from having existing experience and models. Hence, what was said before. We judge before all the facts are in.

Seth and the philosopher Andy Clark, a colleague in Sussex, refer to the predictions made by the brain as “controlled hallucinations”. The idea is that the brain is always building models of the world to explain and predict incoming information; it updates these models when prediction and experience obtained from sensory inputs disagree.

A model is a smaller, simpler, representation of a real thing. Modelling is a data processing method aimed at making useful predictions from scant data

This is the trade-off for high-speed decision making necessary for effective operation. The explanation is probably in evolution; those that acted on the assumption that it was a tiger outlived those that stood there thinking "hang on, is that really a tiger?"

Sometimes we get it wrong. This is a potential problem when applying the new world of AI to things like autonomous vehicle control. We accept humans having accidents but seem to have zero tolerance when driver-less vehicles do the same (even if the accident rate is orders of magnitude better).

Delivering Processing speed from a compact, power efficient package is the objective of both organic brains and neuromorphic computing; even if that means sometimes making an error.

The human mind is a device that models the real world, and neuromorphic computing is based on replicating this abstraction and simplification.

The human brain is inherently sparse in its approach to data processing; stripping out redundant information to increase speed and reduce storage overhead

It seems that brevity truly is the soul of wit

4. We only process what’s different, we ignore sameness

Spike neural processing - radical architecture

Neuromorphic processing is based on mimicking the sparse spiking neuronal synaptic architecture and organic self-organization of the human brain.

Easier said than done, and Brainchip has been working on it for over two decades.

The ability to capture masses of data, discern the salient features, and later to instantaneously recognize those features – is central to high speed decision making. The sense of this can be summed-up in a familiar phrase we have all uttered “I’ve seen this somewhere before”.

"Salient features" is the operative term in the above paragraph. This introduces the concept of Spike Neural Processing and from where the name "Akida" derives. Akida is Greek for spike. Whereas brute force AI (using traditional Von Neumann architecture) must process all data looking for patterns, brains cut the workload down through spiking events where a neuron reaches a set threshold in response to new sensor data before transmitting a signal to a cascading sequence of subsequent neurons (a neural network).

The neural spike thresholds are the triggers for recognizing the salient features.

Simplistically, the difference between traditional architecture based AI processing and neuromorphic AI processing is this...

The traditional computing approach employs very high speed sequential processing. It's like checking to see if a library has the same book by retrieving every book in the library one at a time and comparing it to the one in your hand.

A library search based on neural network principles would work very differently. As you walked into the library, a person might say "Fiction books are all in the back half of the library." This is analogous to a spiking event. Upon arriving there, another person would direct you to the shelves matching the first letter of the author's surname. The second spiking event. Now the search is narrowed to just a few shelves. Finally, a third person says "I know this book, I think you will find it on the last shelf on the right." Finally, you see amongst the books one of a similar size and color. Pulling it out from the shelf, you confirm the match.

The time difference and energy required between the two search methods is huge.

But! Consider this. What if the first person said "That's a fiction book. This library only stocks non-fiction." Search over.

The sequential search method could only reach that conclusion after checking every book in the library. And that is where truly enormous time and power savings are made. The vast majority of AI processing involves data that contains nothing of interest; you have to sift a lot of dirt to find the gold. Neuromorphic artificial intelligence is highly efficient at ignoring irrelevant data.
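The library analogy can be turned into a toy program that counts how many checks each search style needs. Everything here (the tiny catalogue, the function names, the idea of counting "checks") is a made-up illustration of the analogy, not a description of how Akida™ works internally.

```python
# Hypothetical toy library: section -> first letter of author's surname -> titles
library = {
    "non-fiction": {
        "H": ["A Brief History of Time", "Sapiens"],
        "S": ["Cosmos"],
        "D": ["The Selfish Gene"],
    },
}

def sequential_search(library, title):
    """Compare the wanted title with every book, one at a time."""
    checks = 0
    for shelves in library.values():
        for books in shelves.values():
            for book in books:
                checks += 1
                if book == title:
                    return True, checks
    return False, checks

def staged_search(library, section, letter, title):
    """Each stage either narrows the search or ends it immediately."""
    checks = 1                       # stage 1: does the library stock this section?
    shelves = library.get(section)
    if shelves is None:
        return False, checks         # "we only stock non-fiction": search over
    checks += 1                      # stage 2: is there a shelf for this letter?
    books = shelves.get(letter)
    if books is None:
        return False, checks
    for book in books:               # stage 3: scan only the few remaining books
        checks += 1
        if book == title:
            return True, checks
    return False, checks

# Looking for a novel in a library that stocks no fiction
print(sequential_search(library, "Dune"))              # has to look at every book
print(staged_search(library, "fiction", "H", "Dune"))  # gives up at the first stage
```

With four books the saving looks small; with four million books the sequential count grows with the collection while the early "search over" still costs a single check.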

The flaw in my library book analogy is that books and libraries provide efficient search methods through a universal classification system. However, neuromorphic methods classify and store data of any type on the fly through detecting salient features, building a searchable library about anything.

And in any case, if libraries did have people present who could reliably point you in the right direction as described above, the search method described would be miles faster than first using the catalogue - computerized or not.

The neuromorphic approach feeds data to be processed into a cascading network of neurons that are connected in a pattern analogous to the salient features of the stored item. Individual neurons spike when a match is detected in a salient feature. The propagation of electrical signals through this network fans out rapidly in a massively parallel fashion. Electrical signals propagate at a large fraction of the speed of light.

However, Akida™ only processes data that exceeds a spike threshold (set through learning). If an event in the spike neural network fails to exceed the threshold, propagation past that point ceases. Vastly less work is done, thus increasing pattern matching speed and saving power.
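The "below threshold, propagation stops" idea can be sketched with a toy network of threshold neurons. This is a simplified illustration under stated assumptions: binary spikes, all connection weights set to 1.0, and hand-picked thresholds. It is not Brainchip's design or API; `propagate` and `ops` are names invented for this example.

```python
def propagate(spikes, layers, thresholds):
    """Pass binary spikes through layers of threshold neurons.

    `layers[k][i][j]` is the weight from input neuron i to output neuron j.
    Returns the final spikes and the number of synaptic additions performed;
    only connections leaving a neuron that actually spiked cost any work.
    """
    ops = 0
    for weights, threshold in zip(layers, thresholds):
        active = [i for i, s in enumerate(spikes) if s]
        if not active:
            # Nothing spiked in the previous layer: propagation ceases here
            return [0] * len(layers[-1][0]), ops
        n_out = len(weights[0])
        potential = [0.0] * n_out
        for i in active:
            for j in range(n_out):
                potential[j] += weights[i][j]
                ops += 1
        # A neuron spikes only if its accumulated potential reaches the threshold
        spikes = [1 if p >= threshold else 0 for p in potential]
    return spikes, ops

# Assumed toy network: 8 inputs -> 6 neurons -> 3 neurons, every weight 1.0
layers = [
    [[1.0] * 6 for _ in range(8)],
    [[1.0] * 3 for _ in range(6)],
]
thresholds = [2.0, 3.0]

busy_input = [1, 1, 1, 0, 0, 0, 0, 0]    # enough activity to cross the thresholds
quiet_input = [1, 0, 0, 0, 0, 0, 0, 0]   # too little to matter

print(propagate(busy_input, layers, thresholds))
print(propagate(quiet_input, layers, thresholds))
```

A conventional dense pass over this network would touch all 8 x 6 + 6 x 3 = 66 connections for every input, interesting or not. Here the quiet input dies out after the first layer and costs a fraction of that.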

Neural processing through spiking events will identify a pattern match orders of magnitude faster than traditional computing methods and with far greater electrical efficiency.

5. Memory and processing happen in the same place

A big difference between traditional computer (Von Neumann architecture) and brain architecture (replicated in neuromorphic computing) is that MEMORY and PROCESSING happen in the same place. A Von Neumann machine stores data separately, retrieves it from memory, routes it through the central processor and then sends results back to memory. Memory calls are usually the biggest bottleneck (impediment to speed) in any traditional computer.

It is possible for a Von Neumann machine to emulate neural networks. But, Neural network processors that could be classed as "neuromorphic" are in the minority.

In strictly "neuromorphic computing", both processor and memory are combined. Human brains are MASSIVELY parallel in their processing.

Using the library analogy, searching for a book using Von Neumann architecture you would not enter the library (memory) at all. The search would be orchestrated from outside of the library by an agent (CPU) that managed the sequential retrieval of books, comparing each to the search target until either a match is made or the end is reached. That's how traditional computing works.

You can appreciate therefore that traditional AI pattern matching methods that need to retrieve thousands (often millions) of words of information from core memory (or worse, hard-disc storage) will be both much slower and consume vastly more electrical power.

Processing spike events is a radically different architecture, as the spiking occurs within the memory bank. Processing is to a large extent decentralized and occurs throughout the brain. You don't retrieve from memory; electrical signals propagate through it.

This delivers an enormous speed gain and power efficiency when looking to find patterns.

Thus both human brains and Akida™ only process the spike events, and process them in parallel (simultaneously) which dramatically cuts the workload, speeds response time dramatically, and reduces power consumption.

Learning is about setting and fine tuning spike thresholds and setting up a pattern of links to other neurons. This is how the brain operates and Akida™ mimics this structure in the neuromorphic fabric at the core of the chip.

Even as I write this, I realise the above explanation is a bit like explaining motor cars as simply four tyres and a steering wheel. Obviously, the devil is in the detail.

Suffice to say Brainchip has worked the problem and the result is Akida™.

Machine learning on neuromorphic processors

Artificial Intelligence introduces the topic of machine learning.

It works like this. Instead of programming a video device to recognise a cat (for example), you point the device at a series of cats from various angles and tell the machine "these are all cats." Such learning usually also includes similar sized animals such as small dogs and rabbits "by the way, these are NOT cats" (this is called supervised learning - Akida™ is capable of unsupervised learning).

The learning method identifies the salient and differentiating features; from then on the machine will recognise cats on its own.
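Learning from labelled examples rather than hand-written rules can be shown with about the simplest learner there is, a nearest-centroid classifier. The two features and all the numbers are invented for illustration, and this is ordinary supervised learning in plain Python, not Akida™'s on-chip learning.

```python
from math import dist

def train(examples):
    """Average the feature vectors seen for each label (nearest-centroid model)."""
    sums, counts = {}, {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}

def classify(model, features):
    """Pick the label whose average example is closest to the new observation."""
    return min(model, key=lambda label: dist(model[label], features))

# Made-up features: (body length in cm, ear length in cm)
examples = [
    ((46, 6), "cat"), ((50, 7), "cat"), ((44, 6), "cat"),   # "these are all cats"
    ((40, 12), "not cat"),                                    # rabbit
    ((60, 9), "not cat"),                                     # small dog
    ((38, 11), "not cat"),                                    # rabbit
]

model = train(examples)
print(classify(model, (48, 6)))    # an animal the model has never seen
print(classify(model, (39, 12)))
```

Nobody told the program what a cat is; it worked out a rough "average cat" from the examples and compares newcomers against it.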

Such a device could be used in deluxe cat doors that let your cat through but keep other neighborhood moggies out (and for the USA market - shoots them on sight). Today, video recognition exists, but for a cat door it would be stupidly expensive. But, at $10 to $20 per chip, Akida™ will make such applications commonplace. Akida™ can also receive direct video input to process pixels on chip.

Human brains do not store pixel perfect images; they store abstractions - the minimum number of data points needed to support future pattern recognition. Akida™ replicates this approach in silicon. Human vision is diabolically clever, only having sufficient resolution to discern high detail in a small area at the centre of the visual field (a small area of the retina called the macula). Apparent clarity and color depth across the entire visual field is a brain generated illusion. This dramatically cuts down data, and abstraction within the brain cuts it down even further.

The analogy would be comparing a full color picture of a person's face to a very simple sketch with minimal lines - easily recognizable as the same person. But, the sketch requires vastly less work to draw. Interestingly, we see this all the time with political cartoonists who draw satirical pictures. Even grossly distorted cartoon versions of a person (caricatures) are easily recognizable. Clearly the brain doesn't rely on exact matching.

Recognising objects is nothing new in the world of AI - however, doing it "at the edge" (i.e. within a small low powered device not connected to a power hungry server in the cloud) is revolutionary. And further, Akida™ is capable of learning and inference at the edge. That suggests supporting the ability for edge devices to self-learn and improve through "experience."

With a truly bewildering array of off-the-shelf sensors already on the market, astoundingly intelligent and useful enhancements will soon proliferate as a neuromorphic processor like Akida™ is included within the sensor package.

This will enable the sensor to, not just output data, but output a decision.

Hybridised sensors will also emerge that look for patterns in the data generated by multiple sensors. This is after all, what the human brain does. Taste, for example, is greatly dependent on smell and is influenced by visual appearance.

For building truly autonomous human like robots – this is the missing link.

Artificial intelligence Narrow AI vs. General AI

Humanoid robots are just one of thousands of potential applications.

However, mischievously I have taken a bit of creative licence here. Talking about humanoid robots connotes autonomous thinking, self-direction, and perhaps even artificial consciousness (but, if something is conscious – is its consciousness artificial?).

Robo-phobic thinking inculcated through years of imaginative fiction leads us to expect such Robots might exceed their brief to the detriment of humanity.

No need to panic, Akida™ is not in this ballpark.

This is where the reader may wish to research the difference between Narrow AI and General AI. Akida™ is designed for task specific (or narrow) AI where its cleverness is applied to fast learning and processing for very specific useful applications.

While it does apply brain architecture, Neuromorphic computing is nowhere near capable of emulating the power of the human brain. Although, it could well be the first crack of the Pandora lid.

However, Akida™ could be applied to helping humanoid robots maintain balance, control their limbs and navigate around objects. It could also be used for very narrow autonomy like...

IF [Floor is Dirty] AND [Cat Outside] THEN START [Vacuuming Routine]

Robotics is certainly an obvious field of application for Akida™, but probably more for robots that look like this...

On their website Brainchip has identified the obvious applications of this new technology such as: person-type recognition (businessman versus delivery driver for example), hand gesture control, data-packet sniffing (looking for dodgy internet traffic), voice recognition, complex object recognition, financial transaction analysis, and autonomous vehicle control.

One can't help thinking that this list is mysteriously modest.

Broad spectrum breath testing - hand-held medical diagnostics

There is also mention of olfactory signal processing (smell), suggesting use for devices that can identify the presence of molecules in air. Such "bloodhound" devices could be deployed for advanced medical diagnostics that could identify an extraordinary range of diseases and medical conditions simply through sampling a person's breath.

The impact on the medical diagnostics industry could be, er - breath taking.

On 23 July 2020 the company announced that Nobel Prize Laureate Professor Barry Marshall has joined Brainchip's scientific advisory board. Prof. Marshall was awarded the Nobel Prize in 2005 for his pioneering work in the discovery of the bacterium Helicobacter pylori and its role in gastritis and peptic ulcer disease. The gold-standard test for Helicobacter pylori involves analysing a person's breath.

Detection of endogenous (originating from within a person, animal or organism) volatile organic compounds resulting from various disease states has been one of the primary diagnostic tools used by physicians since the days of Hippocrates. With the advent of blood testing, biopsies, X-rays, and CT scans the use of breath to detect medical problems fell out of clinical practice.

The modern era of breath testing commenced in 1971, when Nobel Prize winner Linus Pauling demonstrated that human breath is complex, containing well over 200 different volatile organic compounds.

Certainly works for Police breath testing to measure blood alcohol levels.

Sensing and quantifying the presence of organic compounds (and other molecules) using electronic olfactory sensors feeding into an AI processing system could be the basis for low-cost immediate medical diagnostics assisting clinicians to more quickly identify conditions warranting further investigation and providing earlier indications long before symptoms are apparent.

[March 2021 update]

Semico published an article in their March 2021 IPI Newsletter detailing a joint press release from NaNose (Nano Artificial Nose) Medical and BrainChip announcing a new system for electronic breath diagnosis of COVID-19. This system used an artificially intelligent nano-array based on molecularly modified gold nanoparticles and a random network of single-walled carbon nanotubes paired with the Akida neuromorphic AI processor from BrainChip.

Since then, NaNose has broadened the spectrum of diseases that can be detected and is working toward commercial release...

But, who knows what other applications will emerge when seasoned industrial engineers get their hands on Akida™?

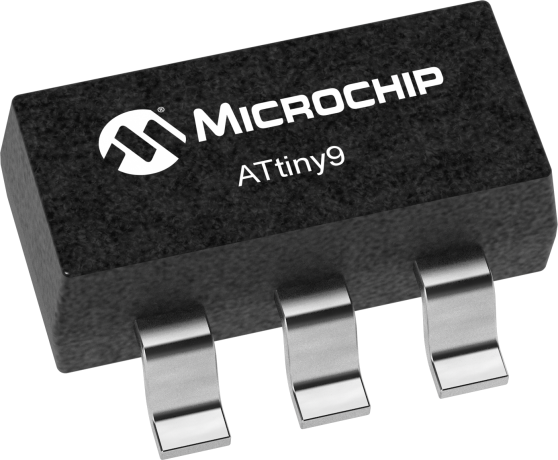

History shows the launch of a first generation chip leads to rapid development

As a moment in the history of technological achievement, the launch of Akida™ could be likened to the launch of Intel's 8008 microprocessor in 1972, the first 8 Bit CPU that spawned the development of a new generation of desktop computing, embedded device control, Industry 3.0 automation and the ubiquity of the internet.

The microprocessor enabled practical computing at the edge. There was no longer any need to submit data to a mainframe sitting in a large air-conditioned room tended by chaps in white coats, and then wait hours or days for results to come back.

The Atmel ATTINY9-TS8R Microprocessor costs around USD$0.38

Nearly fifty years later, we know how that has turned out. Barely a device exists that doesn't have at least one microprocessor chip in it. Recent estimates suggest the average household has 50 microprocessors tucked away in washing machines, air-conditioning systems, motor vehicles, game consoles, calculators, dishwashers and almost anything that has electricity running through it.

The clock speed of the 8008 back in 1972 was a leisurely 0.8 MHz and the chip housed 3,500 transistors. Some 45 years later, Intel's 8th-generation chips run at clock speeds roughly 5,000 times faster and house billions of transistors.
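As a quick sanity check on that comparison, the arithmetic can be sketched in a few lines of Python. The two input figures are the ones quoted in the text; the variable names are only illustrative.

```python
# Figures quoted in the article (not independently measured here)
clock_8008_hz = 0.8e6   # 0.8 MHz clock of the 8008
speedup = 5_000         # "5,000 times faster"

# Implied clock speed of the modern chip
modern_clock_hz = clock_8008_hz * speedup
modern_clock_ghz = modern_clock_hz / 1e9

print(f"Implied modern clock speed: {modern_clock_ghz:.1f} GHz")
```

A factor of 5,000 over 0.8 MHz works out to a clock speed in the region of 4 GHz.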

Price has been a key enabler for market proliferation. Putting a microprocessor in a washing machine (instead of an electro-mechanical cam wheel timer) is feasible because the microprocessor chip costs a few cents and is quicker and simpler to factory-program, orders of magnitude more reliable, and delivers far greater functionality (although most of us wash clothes on the same settings all the time).

Akida™ could choose the optimum settings based on scanning the clothes, weighing them, and perhaps even smelling them.

Machine contains 4.5 kg of ALL WHITES --> Add 35 ml of bleach.
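To make the idea concrete, here is a minimal Python sketch of the decision step that would sit downstream of the sensors. It is purely illustrative and is not Brainchip's software or API: the `Load` type, the `recommend` function, and the assumption that the bleach dose scales linearly from the single example above (35 ml for 4.5 kg) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Load:
    """Hypothetical summary of what the machine's sensors report."""
    weight_kg: float
    colour_class: str  # e.g. "ALL WHITES", as labelled by a classifier


# Assumption: dose scales linearly from the one example in the text
# (35 ml of bleach for a 4.5 kg load). Not a real dosing guideline.
BLEACH_ML_PER_KG = 35 / 4.5


def recommend(load: Load) -> str:
    """Turn a classified load into a human-readable recommendation."""
    prefix = f"Machine contains {load.weight_kg} kg of {load.colour_class}"
    if load.colour_class == "ALL WHITES":
        dose_ml = round(load.weight_kg * BLEACH_ML_PER_KG)
        return f"{prefix} --> Add {dose_ml} ml of bleach."
    return f"{prefix} --> Do not add bleach."


print(recommend(Load(weight_kg=4.5, colour_class="ALL WHITES")))
```

In a real appliance the hard part is the classification itself (recognising the load from camera, weight and odour data), which is the job the article envisages for Akida™; the rule above is the trivial last step.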

The game-changing significance of Brainchip's work cannot be overstated.

AI at the edge will dramatically improve the performance of artificial limbs.

Akida™ will soon be the Model-T of neuromorphic processors

The most exciting aspect of Brainchip's work is the pioneering nature of the technology.

As a reminder, the Model-T disrupted the automotive world by introducing production-line manufacturing, and it brought car ownership to the average person. However, the Model-T was quite quickly improved upon, and subsequent models were light years ahead. Great though Akida™ currently is, it is only the beginning.

The launch of the first microprocessors in the seventies put low-cost data processing into the hands of teenagers. Kids started building computers in their bedrooms and garages, and some went on to found companies such as Microsoft and Apple.

Akida™ will provide the initial Artificial Intelligence building blocks for the next generation. Brainchip hasn't just created a chip; it has enabled an ecosystem by making a free software suite of development tools available.

Brainchip has a new breed of engineers and scientists who have steeped themselves deeply in neuromorphic methods. They have opened the door into a whole new technological landscape.

While we think Akida™ is exciting, it's what comes after that will be truly amazing.

The world is about to change fast.

Further reading

- Brainchip Inc. official website

- Neuromorphic Chip Maker Takes Aim At The Edge [The Next Platform]

- Spiking Neural Networks, the Next Generation of Machine Learning

- Deep Learning With Spiking Neurons: Opportunities and Challenges

- How close are we to a real Star Trek style medical Tricorder? [Despina Moschou, The Independent]

- If only AI had a brain [Lab Manager]

- Intel’s Neuromorphic Chip Scales Up [and It Smells] [HPC Wire]

- Neuromorphic Computing - Beyond Today’s AI - New algorithmic approaches emulate the human brain’s interactions with the world. [Intel Labs]

- Neuromorphic Chips Take Shape [Communications of the ACM]

- Why Neuromorphic Matters: Deep Learning Applications - A must read.

- The VC’s Guide to Machine Learning

- What is AI? Everything you need to know about Artificial Intelligence.

- BRAINCHIP ASX: Revolutionising AI at the edge. Could this be the future SKYNET? [YouTube Video - a very good summary]

- In depth analysis of Brainchip as a stock [with discussion about the technology] [YouTube Video]

- Intel’s Neuromorphic System Hits 8 Million Neurons, 100 Million Coming by 2020 [The Spectrum]

- Bringing AI to the device: Edge AI chips come into their own TMT Predictions 2020 [Deloitte Insights]

- Inside Intel’s billion-dollar transformation in the age of AI [Fast Company]

- Spiking Neural Networks for more efficient AI - [Chris Eliasmith Centre for Theoretical Neuroscience University of Waterloo], really fantastic explanation - Jan 21, 2020 (predates launch of Akida)

- Software Engineering in the era of Neuromorphic Computing - "Why is NASA interested in Neuromorphic Computing?" [Michael Lowry NASA Senior Scientist for Software Reliability]

- Could Breathalyzers Make Covid Testing Quicker and Easier? [Keith Gillogly, Wired - 15 Sept 2020]

- Artificial intelligence creates perfumes without being able to smell them [DW Made For Minds]

- Epileptic Seizure Detection Using a Neuromorphic-Compatible Deep Spiking Neural Network [Zarrin P.S., Zimmer R., Wenger C., Masquelier T. [2020]]

- Flux sur Autoroute, processing d’image [Highway traffic, image processing] [Simon Thorpe CerCo (Centre de Recherche Cerveau & Cognition, UMR 5549) & SpikeNet Technology SARL, Toulouse]

- Exclusive: US and UK announce AI partnership [AXIOS] 26 September 2020

- Breath analysis could offer a non-invasive means of intravenous drug monitoring if robust correlations between drug concentrations in breath and blood can be established. From: Volatile Biomarkers, 2013 [Science Direct]

- Moment of truth coming for the billion-dollar BrainChip [Sydney Morning Herald by Alan Kruger - note: might be pay-walled]

- Introducing a Brain-inspired Computer - TrueNorth's neurons to revolutionize system architecture [IBM website]

- Assessment of breath volatile organic compounds in acute cardiorespiratory breathlessness: a protocol describing a prospective real-world observational study [BMJ Journals]

- What’s Next in AI is Fluid Intelligence [IBM website]

- ARM sold to Nvidia for $40billion [Cambridge Independent]

- Interview with Brainchip CEO Lou Dinardo [16 September 2020] [Video: Pitt Street Research]

- BrainChip Holdings [ASX:BRN]: Accelerating Akida's commercialisation & collaborations [1 September 2020:Video: tcntv]

- BrainChip Awarded New Patent for Artificial Intelligence Dynamic Neural Network [Business Wire]

- BrainChip and VORAGO Technologies agree to collaborate on NASA project [Tech Invest]

- Intel inks agreement with Sandia National Laboratories to explore neuromorphic computing [Venture Beat - The Machine - Making Sense of AI]

- Congress Wants a 'Manhattan Project' for Military Artificial Intelligence [Military.com]

- Future Defense Task Force: Scrap obsolete weapons and boost AI [US Defense News]

- Is Brainchip A Buy? [ASX: BRN] | Stock Analysis | High Growth Tech Stock [YouTube - Project One]

- Is Brainchip Holdings [ASX: BRN] still a buy after its announcement today? | ASX Growth Stocks [YouTube ASX Investor]

- Hot AI Chips To Look Forward To In 2021 [Analytics India Magazine]

- Scientists linked artificial and biological neurons in a network — and it worked [The Mandarin]

- Scary applications of AI - "Slaughterbot" Autonomous Killer Drones | Technology [YouTube Video]

- BrainChip aims to cash in on industrial automation with neuromorphic chip Akida [Tech Channel - Naushad K. Cherrayil]

- How Intel Got Blindsided and Lost Apple’s Business [Marker]

- Neuromorphic Revolution - Will Neuromorphic Architectures Replace Moore’s Law? [Kevin Morris - Electronic Engineering Journal]

- Insights and approaches using deep learning to classify wildlife [Scientific Reports]

- Artificial Neural Nets Finally Yield Clues to How Brains Learn [Quanta Magazine]

- Rob May, General Partner PJC Venture Capital Conversation 181 with Inside Market Editor, Phil Carey - discuss edge computing on YouTube.

- Why Amazon, Google, and Microsoft Are Designing Their Own Chips [Bloomberg Businessweek: Ian King and Dina Bass]

- "Intelligent Vision Sensor" [YouTube video - Sony]

- What is the Real Promise of Artificial Intelligence? [SEMICO Research and Consulting Group]

- NaNose Medical and BrainChip Innovation [SEMICO - Rich Wawrzyniak]

- NaNose - a practical application of Akida to detecting disease through breath analysis [YouTube Video]

- NaNose company website

- What is the AI of things [AIoT]? [Embedded]

- Brainchip Holdings Ltd [BRN] acting CEO, Peter van der Made Interview June 2021 [YouTube]

- Spiking Neural Networks: Research Projects or Commercial Products? [Bryon Moyer, technology editor at Semiconductor Engineering]

- Our brains exist in a state of “controlled hallucination” [Insider Voice]

- BrainChip Launches Event-Domain AI Inference Dev Kits [EETimes: Sally Ward-Foxton]

- New demand, new markets: What edge computing means for hardware companies [McKinsey & Company]

- Brainchip - Where to next? An investment perspective [YouTube Video]

- Self-Learning Neuromorphic Chip Market To Grow At USD 2.76 Billion at a CAGR of 26.23% by 2030 - Report by Market Research Future [MRFR]